Because strings are such a huge problem nowadays, every single software developer needs to know the internals of them. I can’t even stress it enough, strings are such a burden nowadays that if you don’t know how to encode and decode one, you’re beyond fucked. It’ll make programming so difficult - no, even worse, nigh impossible! Only those who know about Unicode will be able to write any meaningful code.

currency symbols other than the $ (kind of tells you who invented computers, doesn’t it?)

Who wants to tell the author that not everything was invented in the US? (And computers certainly weren’t)

The stupid thing is, all the author had to do was write “kind of tells you who invented ASCII” and he’d have been 100% right in his logic and history.

Where were computers invented in your mind? You could define computer multiple ways but some of the early things we called computers were indeed invented in the US, at MIT in at least one case.

Well, it’s not really clear-cut, which is part of my point, but probably the 2 most significant people I could think of would be Babbage and Turing, both of whom were English. Definitely could make arguments about what is or isn’t considered a ‘computer’, to the point where it’s fuzzy, but regardless of how you look at it, ‘computers were invented in America’ is rather a stretch.

‘computers were invented in America’ is rather a stretch.

Which is why no one said that. I read most of the article and I’m still not sure what you were annoyed about. I didn’t see anything US-centric, or even anglocentric really.

To say I’m annoyed would be very much overstating it, just a (very minor) eye-roll at one small line in a generally very good article. Just the bit quoted:

currency symbols other than the $ (kind of tells you who invented computers, doesn’t it?)

So they could also be attributing it to some other country that uses $ for their currency, which is a few, but it seems most likely to be suggesting USD.

I think the author’s intended implication is absolutely that it’s a dollar because the USA invented the computer. The two problems I have are that:

- He’s talking about the American Standard Code for Information Interchange, not computers at that point

- Brits or Germans invented the computer (although I can’t deny that most of today’s commercial computers trace back to the US)

It’s just a lazy bit of thinking in an otherwise excellent and internationally-minded article and so it stuck out to me too.

If you go to the page without the trailing slash, the images don’t load

Now this is UX. Wonderful stuff.

And the site’s dark mode is fantastic…

The only modern language that gets it right is Swift:

print("🤦🏼‍♂️".count) // => 1

Minor, but I’m not sure this is as unambiguous as the article claims. It’s true that for someone “that isn’t burdened with computer internals” this is the most obvious “length” of the string, but programmers are by definition burdened with computer internals. That’s not to say the length shouldn’t be 1 though, it’s more that the “length” field/property has a terrible name, and asking for the length of a string is a very ambiguous question to begin with.

Instead, I think a better solution is to be clear what length you’re actually referring to. For example, with Rust, the `.len()` method documents itself as the number of bytes in the string and warns that it may not be what you’re interested in. Similarly, `.chars()` clarifies that it iterates over Unicode Scalar Values, and not grapheme clusters (and that grapheme clusters are unfortunately not handled by the standard library).

For most high-level applications, I think you generally do want to work with grapheme clusters, and what Swift does makes sense (assuming you can also iterate over the individual bytes somehow for low-level operations). As long as it is clearly documented what your “length” refers to, and assuming the other lengths can be calculated, I think any reasonably useful length is valid.
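To make the "which length?" point concrete, here is a rough Python sketch that computes three of the lengths discussed above for the same emoji. The variable names are made up for illustration, and the emoji is written as escapes so the invisible zero-width joiner can't get lost. Grapheme clusters are left out on purpose: as far as I know Python's standard library doesn't segment them either, so you'd need a third-party package for that.

```python
# The facepalm emoji from the article, spelled out code point by code point:
# U+1F926 (face palm), U+1F3FC (skin tone), U+200D (zero-width joiner),
# U+2642 (male sign), U+FE0F (variation selector).
s = "\U0001F926\U0001F3FC\u200D\u2642\uFE0F"

# Number of UTF-8 bytes: the quantity Rust's str::len() reports.
utf8_bytes = len(s.encode("utf-8"))

# Number of UTF-16 code units: the quantity JavaScript/Java/C# .length reports.
utf16_units = len(s.encode("utf-16-le")) // 2

# Number of code points: what Python 3's len() reports,
# comparable to Rust's .chars().count().
code_points = len(s)

print(utf8_bytes, utf16_units, code_points)  # 17 7 5
```

All three numbers are "correct"; they just answer different questions, which is why a bare "length" is such an ambiguous name.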

The article they link in that section does cover a lot of the nuances between them, and is a great read for more discussion around what the length should be.

Edit: I should also add that Korean, for example, adds some additional complexity to it. For example, what’s the string length of 각? Is it 1, because it visually consumes a single “space”? Or is it 3 because it’s 3 letters (ㄱ, ㅏ, ㄱ)? Swift says the length is 1.
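If you want to poke at the Korean example yourself, here is a small Python sketch using the standard `unicodedata` module (variable names are just for illustration). It assumes the string is the single precomposed syllable U+AC01 and decomposes it with NFD. Note that NFD produces *conjoining* jamo (U+1100 and friends), which are different code points from the standalone compatibility letters ㄱ and ㅏ typed above, even though they look alike.

```python
import unicodedata

# 각 as one precomposed Hangul syllable code point.
syllable = "\uAC01"

# Canonical decomposition (NFD) splits it into its conjoining jamo.
jamo = unicodedata.normalize("NFD", syllable)

print(len(syllable), len(jamo))               # 1 3
print([f"U+{ord(c):04X}" for c in jamo])      # ['U+1100', 'U+1161', 'U+11A8']

# Recomposing (NFC) gives back the original single code point.
print(unicodedata.normalize("NFC", jamo) == syllable)  # True
```

So even a code point count can be 1 or 3 for the same visible syllable, depending on normalization.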

Just give me plain UTF-32 with ~4 billion code points; that really should be enough for any symbol we can come up with. Give everything its own code point, no bullshit with combined glyphs that make text processing a nightmare. I need to be able to do a strlen on either byte length or number of characters without the CPU spending minutes counting each individual character.
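For what it's worth, here is a quick Python sketch of what UTF-32 does and doesn't buy you today (names are illustrative only). It shows that a fixed-width encoding makes counting code points trivial, but the emoji from the article is still five code points, and "é" can still be spelled as either one or two code points. Getting rid of that would need a change to Unicode itself, not just a different encoding.

```python
import unicodedata

emoji = "\U0001F926\U0001F3FC\u200D\u2642\uFE0F"

# In UTF-32 every code point is exactly 4 bytes, so counting is a division.
utf32_units = len(emoji.encode("utf-32-le")) // 4
print(utf32_units)  # 5, still not 1

# Combining sequences exist independently of the encoding.
composed = "\u00E9"     # é as a single precomposed code point
decomposed = "e\u0301"  # e followed by a combining acute accent

print(len(composed), len(decomposed))  # 1 2
print(composed == decomposed)          # False
print(unicodedata.normalize("NFC", decomposed) == composed)  # True
```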

I think Unicode started as a great idea and then kind of blubbered into aimless “everybody kinda does what everyone wants” territory. Unicode is for humans, sure, but we shouldn’t forget that computers actually have to do the work.

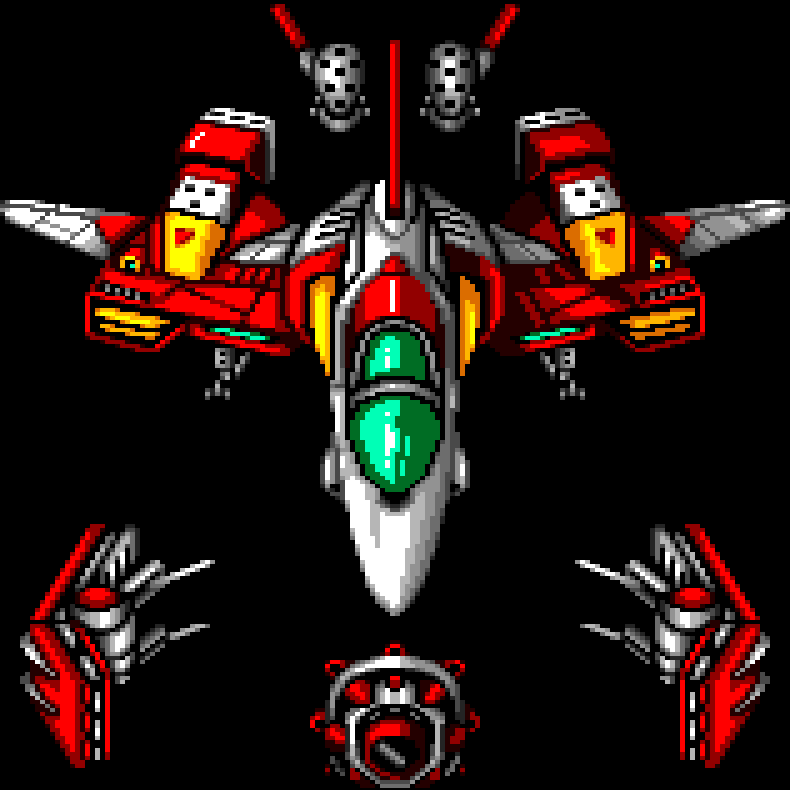

I love the comparison of string length of the same UTF-8 string in four programming languages (only the last one is correct, by the way):

Python 3:

len("🤦🏼‍♂️")

5

JavaScript / Java / C#:

"🤦🏼‍♂️".length

7

Rust:

println!("{}", "🤦🏼‍♂️".len());

17

Swift:

print("🤦🏼‍♂️".count)

1

That depends on your definition of correct lmao. Rust’s `.len()` explicitly counts UTF-8 bytes, not scalar values or graphemes, because that’s the length of the raw data contained in the string. There are many times where that value is more useful than the grapheme count.

The mouse pointer background is kinda a dick move. Good article, but the background is annoying for tired old eyes - which I assume are a target demographic for that article.

js console:

document.querySelector('.pointers').hidden=true

Thank you for this! You can also get rid of it with a custom ad-blocker rule. I added these to uBlock Origin, and it totally kills the pointer thing.

wss://tonsky.me

http://tonsky.me/pointers/

https://tonsky.me/pointers/