- cross-posted to:

- technology@beehaw.org

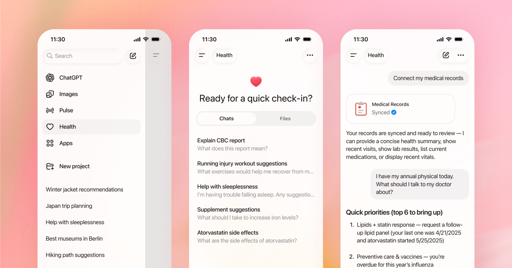

But it’s ‘not intended for diagnosis or treatment.’

Archived version: https://archive.is/20260107211857/https://www.theverge.com/ai-artificial-intelligence/857640/openai-launches-chatgpt-health-connect-medical-records

This will kill people I guarantee it

And OpenAI will take zero accountability

Since ChatGPT already does, why not?

Like, if a person did this kind of bullshit, they’d be put in jail. When Sam Altman does it, it’s a technological marvel.

Videos got too long. I'm not so short-attention-spanned that I only want Shorts, but a half hour is insane. I miss 7-12 minute videos.

Well, the short of it is: ChatGPT fed a man’s delusions of being targeted by them and of surviving multiple imagined assassination attempts, even pointing the finger at the man’s own mother, culminating in a murder-suicide.

It’s not the only time ChatGPT has coaxed someone into killing someone else, either; the video brings up another example.

Nor is it the only time ChatGPT has coaxed someone into suicide. Wikipedia has started collating a list on the subject!

Absolutely fucking not. I don’t need a sycophantic hallucinatory robot telling me to drink bleach to cure my athlete’s foot.

HELL NO.

“Simply entrust your most sensitive health data to a for-profit AI company” - what could possibly go wrong?

A for profit AI company that doesn’t turn a profit.

Tech companies forgot what liability is, and given the lawyers they employ I assume they did the cost benefit analysis first.

Big-time HIPAA violation

Holy shit no

give us your data give us your data give us your data

Fuck every single thing about this.

With my whole chest absolutely the fuck not.

There is literally no way they will be able to prevent that from breaking the law.

Right after I finish connecting my bank account to North Korea.

probably more profitable

Do I get an option of lube before I bend over and take it in the ass?

Only in the premium plans.

🤣🤣🤣😭

That’s gonna be a no from me, dawg.